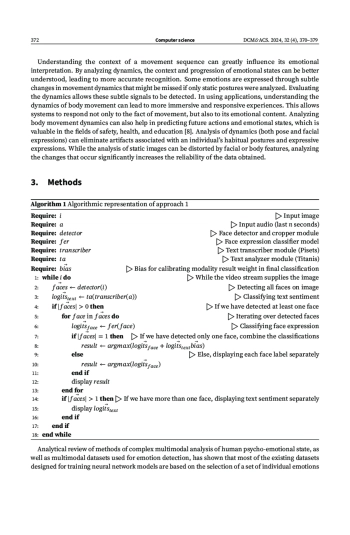

The paper presents a new multimodal approach to analyzing a person’s psycho-emotional state using nonlinear classifiers. The main modalities are the subject’s speech and video of the subject’s facial expressions. Speech is digitized and transcribed with the Pisets ("Scribe") library, and mood cues are then extracted with the Titanis sentiment analyzer from the FRC CSC RAS. For visual analysis, two approaches were implemented: a pre-trained ResNet model that classifies sentiment directly from facial expressions, and a deep learning model that integrates ResNet with a graph-based deep neural network for facial recognition. Both approaches faced challenges from environmental factors that affect the stability of the results. The second approach proved more flexible: its classification vocabularies are adjustable, which facilitated post-deployment calibration. Integrating text and visual data significantly improved the accuracy and reliability of the analysis of a person’s psycho-emotional state.
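The abstract does not say how the text and visual outputs are combined. As a rough illustration only, the sketch below uses a plain weighted average of per-modality class probabilities (late fusion) as a stand-in for the paper's nonlinear classifiers. The label set `LABELS`, the function names, and the `text_weight` parameter are all hypothetical and do not come from the paper, Pisets, or Titanis.

```python
from typing import Dict

# Hypothetical shared label vocabulary; the source does not list its classes.
LABELS = ("negative", "neutral", "positive")


def normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale non-negative scores over LABELS so that they sum to 1."""
    total = sum(scores.get(label, 0.0) for label in LABELS)
    if total <= 0:
        # No evidence from this modality: fall back to a uniform distribution.
        return {label: 1.0 / len(LABELS) for label in LABELS}
    return {label: scores.get(label, 0.0) / total for label in LABELS}


def fuse(text_scores: Dict[str, float],
         face_scores: Dict[str, float],
         text_weight: float = 0.5) -> Dict[str, float]:
    """Weighted average of two per-modality distributions (late fusion)."""
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be in [0, 1]")
    text_p = normalize(text_scores)
    face_p = normalize(face_scores)
    return {
        label: text_weight * text_p[label] + (1.0 - text_weight) * face_p[label]
        for label in LABELS
    }


def predict(text_scores: Dict[str, float],
            face_scores: Dict[str, float],
            text_weight: float = 0.5) -> str:
    """Return the label with the highest fused probability."""
    fused = fuse(text_scores, face_scores, text_weight)
    return max(fused, key=fused.get)


# Illustrative inputs, not measured data.
text = {"negative": 0.2, "neutral": 0.3, "positive": 0.5}
face = {"negative": 0.6, "neutral": 0.3, "positive": 0.1}
print(predict(text, face))                   # equal weights
print(predict(text, face, text_weight=0.8))  # trust the transcript more
```

Keeping the label vocabulary in one place mirrors the adjustable classification vocabularies mentioned above. A calibration step after deployment could then change `LABELS` or `text_weight` without retraining either model.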

Automatic detection and identification of signs of psycho-emotional states is a topical applied direction in the development of engineering and artificial intelligence technologies. Such systems make it possible to automate the monitoring of the actions of individuals and groups of people, including in high-risk locations, by promptly informing the responsible services.

Publisher

- Publisher: RUDN
- Region: Russia, Moscow
- Postal address: 117198, Moscow, Miklukho-Maklaya St., 6
- Legal address: 117198, Moscow, Obruchevsky District, Miklukho-Maklaya St., 6
- Contact person: Oleg Aleksandrovich Yastrebov (Rector)
- E-mail: rector@rudn.ru
- Phone: +7 (495) 4347027
- Website: https://www.rudn.ru/